Home Page and Blog of the Multilingual NLP course @ Sapienza University of Rome

Friday, May 27, 2022

Lecture 23 (26/05/2022, 3 hours): Machine Translation and closing

Introduction to machine translation (MT) and history of MT. Overview of statistical MT. Beam search for decoding. Introduction to neural machine translation (NMT): the encoder-decoder neural architecture. The BLEU evaluation score. Performance and recent improvements. Attention in NMT. Closing of the course!
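For readers who want a concrete picture of beam search for decoding, one of the topics of Lecture 23, here is a minimal sketch. It is not the code used in class: `step_fn` is a hypothetical stand-in for a trained decoder that returns next-token probabilities, `TOY` is an invented toy distribution, and the sketch leaves out length normalization, which real MT decoders usually add.

```python
import math


def beam_search(step_fn, start, end, beam_size=3, max_len=10):
    """Return (tokens, log_prob) of the best hypothesis found.

    step_fn(prefix) -> dict mapping each possible next token to its
    probability. It is an assumed interface, not a real library call.
    """
    beams = [([start], 0.0)]  # (tokens so far, cumulative log-probability)
    finished = []
    for _ in range(max_len):
        # Expand every live hypothesis by every possible next token.
        candidates = []
        for tokens, score in beams:
            for tok, p in step_fn(tokens).items():
                if p > 0:
                    candidates.append((tokens + [tok], score + math.log(p)))
        if not candidates:
            break
        # Keep only the beam_size best expansions.
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for tokens, score in candidates[:beam_size]:
            if tokens[-1] == end:
                finished.append((tokens, score))
            else:
                beams.append((tokens, score))
        if not beams:
            break
    finished.extend(beams)  # hypotheses cut off by max_len still compete
    return max(finished, key=lambda c: c[1])


# Invented toy model: the next token depends only on the previous one.
TOY = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.9, "dog": 0.1},
    "cat": {"</s>": 1.0},
    "dog": {"</s>": 1.0},
}


def toy_step(prefix):
    return TOY[prefix[-1]]


greedy_tokens, _ = beam_search(toy_step, "<s>", "</s>", beam_size=1)
beam_tokens, _ = beam_search(toy_step, "<s>", "</s>", beam_size=2)
```

With `beam_size=1` the search is greedy and commits to "the" (probability 0.6), ending with a sequence of probability 0.3. With `beam_size=2` it keeps "a" alive and finds "a cat", which has probability 0.36.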

Lecture 22 (23/05/2022, 3 hours): Semantic Parsing, AMR and BMR (BabelNet Meaning Representation), Natural Language Generation and stochastic parrots

Semantic Parsing, AMR and BMR (BabelNet Meaning Representation), Natural Language Generation and stochastic parrots.

Sunday, May 22, 2022

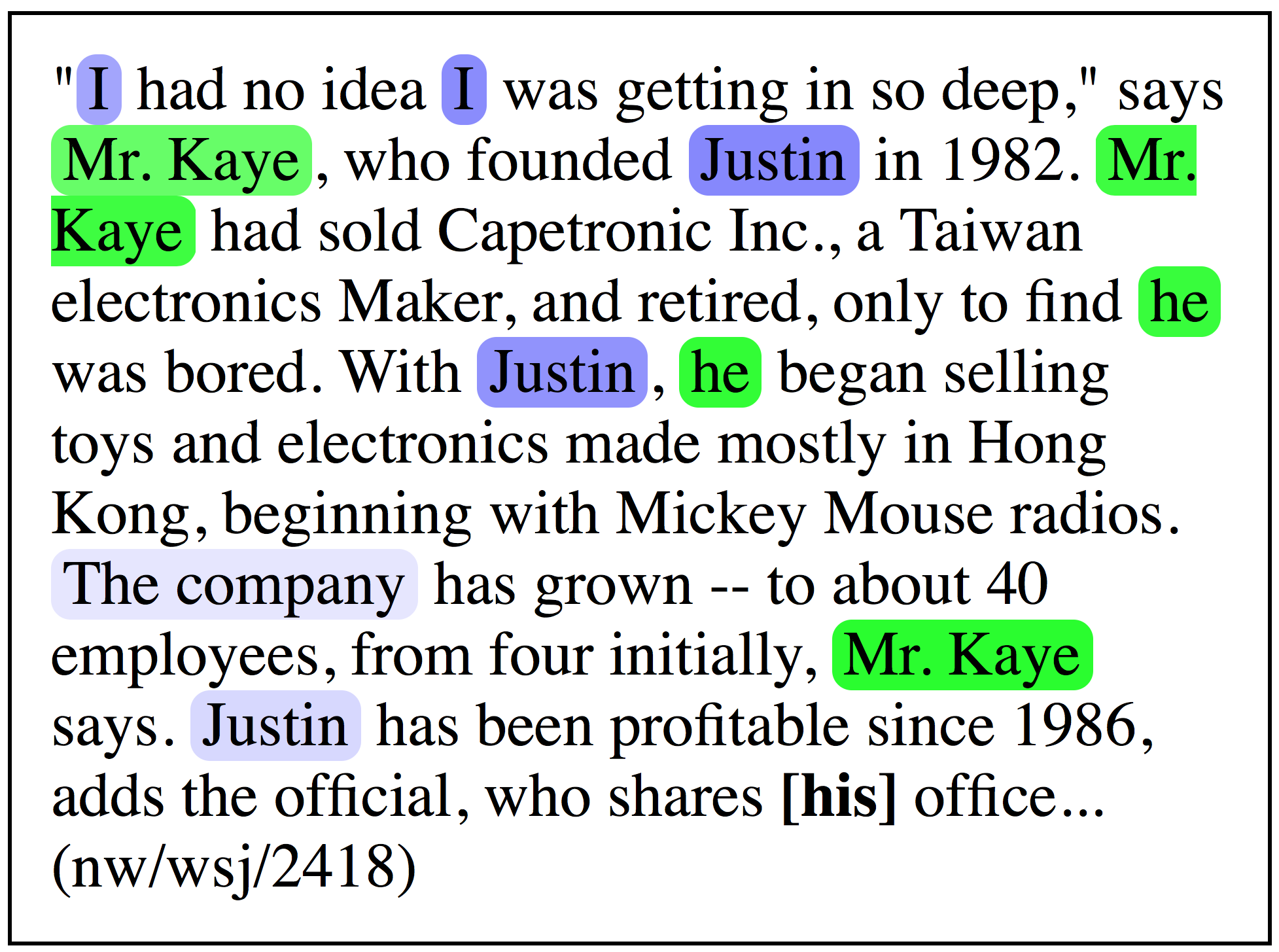

Lecture 21 (19/05/2022, 2 hours): more on Word Sense Disambiguation; co-reference resolution and homework #3

Neural WSD: Transformer-based approaches. Integration of knowledge and neural WSD. Datasets and issues in WSD. Scaling multilingually. Introduction to coreference resolution. Presentation of homework 3.

Monday, May 16, 2022

Lecture 20 (16/05/2022, 3 hours): Word Sense Disambiguation and Semantic Role Labeling + hw2 assignment

Word Sense Disambiguation: introduction to the task. Purely data-driven and neuro-symbolic approaches. Issues. Semantic Role Labeling: introduction to the task. Inventories. Neural approaches. Issues. Homework 2 assignment.

Friday, May 13, 2022

Lecture 19 (09/05/2022, 3 hours): the Transformer architecture

The Transformer architecture. Rationale. Self- and cross-attention; keys, queries, values. Encoder and decoder blocks.
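As a rough illustration of how queries, keys and values interact, here is a minimal pure-Python sketch of scaled dot-product attention. It is an assumption-laden simplification rather than the lecture's notebook: the learned projection matrices, multiple heads and masking are all left out, and the function names are chosen only for this example.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention on lists of vectors.

    Each output row is a weighted average of the value vectors, with
    weights softmax(q . k / sqrt(d_k)) over all keys.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        dim_v = len(values[0])
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim_v)]
        )
    return outputs


# Self-attention: queries, keys and values all come from the same sequence.
sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
self_attended = attention(sequence, sequence, sequence)

# Cross-attention: queries come from the decoder, keys and values from the encoder.
decoder_states = [[1.0, 0.0]]
encoder_states = [[1.0, 1.0], [1.0, 1.0]]
encoder_values = [[0.0, 2.0], [4.0, 6.0]]
cross_attended = attention(decoder_states, encoder_states, encoder_values)
```

In the cross-attention call both keys are identical, so the weights are uniform and the output is simply the mean of the two value vectors.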

Lecture 19b (12/05/2022, 3 hours): pre-trained Transformer models and Transformer notebook

Pre-training and fine-tuning. Encoder, decoder and encoder-decoder pre-trained models. GPT, BERT. Masked language modeling and next-sentence prediction tasks. Practical session on the Transformer with BERT.
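To make the masked language modeling objective more concrete, here is a small sketch of the input corruption step as described for BERT: about 15% of the tokens are selected as prediction targets, and of those roughly 80% are replaced by `[MASK]`, 10% by a random token, and 10% are left unchanged. The function name `mask_tokens`, its parameters and the toy vocabulary are assumptions made for this example, not part of the practical session's notebook.

```python
import random


def mask_tokens(tokens, vocab, mask_prob=0.15, seed=None):
    """Corrupt a token list for masked language modeling.

    Returns (inputs, labels). labels[i] holds the original token where the
    model must predict it, and None where no prediction is required.
    """
    rng = random.Random(seed)
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            labels.append(tok)  # this position is a prediction target
            r = rng.random()
            if r < 0.8:
                inputs.append("[MASK]")           # 80%: mask token
            elif r < 0.9:
                inputs.append(rng.choice(vocab))  # 10%: random token
            else:
                inputs.append(tok)                # 10%: keep the original
        else:
            inputs.append(tok)
            labels.append(None)
    return inputs, labels


sentence = "the cat sat on the mat".split()
toy_vocab = ["the", "cat", "sat", "on", "mat", "dog"]
inputs, labels = mask_tokens(sentence, toy_vocab, seed=0)
```

The model is then trained to recover `labels[i]` from the corrupted `inputs` at every position where the label is not `None`.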